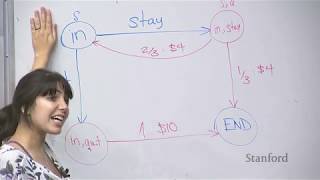

Web Reference: I am looking for a book (or online articles) on Markov decision processes that contains lots of worked examples or problems with solutions. The purpose of the book is to cut my teeth on some problems during long commutes.

Apr 25, 2017 · A Markov chain is a discrete-valued Markov process. Discrete-valued means that the state space of the Markov chain is finite or countable. A Markov process is a stochastic process in which the past history of the process is irrelevant once you know the current system state. In other words, all information about the past and present that would be useful in saying ...

Markov processes and, consequently, Markov chains are both examples of stochastic processes. The terms "random process" and "stochastic process" are interchangeable (at least in many books on the subject).
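To make the Markov property described above concrete, here is a minimal sketch of simulating a finite-state Markov chain. The two weather states, the transition probabilities, and the names `TRANSITIONS`, `step`, and `simulate` are all assumptions made up for illustration; none of them come from the quoted answer.

```python
import random

# Hypothetical two-state chain; the states and probabilities are illustrative only.
TRANSITIONS = {
    "sunny": {"sunny": 0.8, "rainy": 0.2},
    "rainy": {"sunny": 0.4, "rainy": 0.6},
}


def step(state, rng):
    """Sample the next state from the current state alone (the Markov property)."""
    next_states = list(TRANSITIONS[state])
    weights = [TRANSITIONS[state][s] for s in next_states]
    return rng.choices(next_states, weights=weights, k=1)[0]


def simulate(start, n_steps, seed=0):
    """Return a path of n_steps + 1 states, beginning at `start`."""
    rng = random.Random(seed)  # seeded so repeated runs give the same path
    path = [start]
    for _ in range(n_steps):
        # Only path[-1] is consulted; earlier history is never used.
        path.append(step(path[-1], rng))
    return path


if __name__ == "__main__":
    print(simulate("sunny", 10))
```

Note that `step` receives only the current state, so the earlier part of the path cannot influence the next draw. That is the sense in which the past is irrelevant once the present state is known.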

YouTube Excerpt: Deterministic route finding isn't enough for the real world - Nick Hawes of the Oxford Robotics Institute takes us through some ...


Last Updated: April 4, 2026


Disclaimer: Color estimates are based on publicly available data, media reports, and financial analysis. Actual numbers may vary.