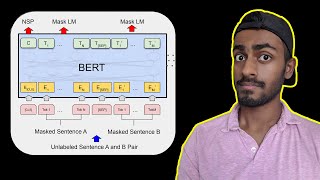

Web Reference: Bidirectional Encoder Representations from Transformers (BERT) is a language model introduced in October 2018 by researchers at Google. [1][2] It uses the encoder-only transformer architecture and learns to represent text as a sequence of vectors through self-supervised learning. BERT is open source and is designed for natural language processing (NLP). Unlike the language representation models that were recent at the time, BERT is designed to pre-train deep bidirectional representations from unlabeled text by jointly conditioning on both left and right context in all layers.
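To make "jointly conditioning on both left and right context" concrete, here is a toy sketch in Python. It is not BERT's implementation: the function names `attention_mask` and `visible_context` and the four-word sentence are hypothetical, chosen only for illustration. It compares which positions a token may see under a bidirectional (encoder-style) mask with what it may see under a left-to-right (causal) mask.

```python
def attention_mask(seq_len: int, bidirectional: bool) -> list[list[int]]:
    """Build a seq_len x seq_len visibility matrix.

    Entry [i][j] is 1 when position i may look at position j.
    Bidirectional: every position sees every position.
    Causal: position i sees only positions j <= i (left context).
    """
    return [
        [1 if bidirectional or j <= i else 0 for j in range(seq_len)]
        for i in range(seq_len)
    ]


def visible_context(tokens: list[str], mask: list[list[int]], position: int) -> list[str]:
    """Return the tokens that the token at `position` is allowed to see."""
    return [tok for tok, visible in zip(tokens, mask[position]) if visible]


# Hypothetical example sentence, already split into tokens.
tokens = ["the", "cat", "sat", "down"]

encoder_mask = attention_mask(len(tokens), bidirectional=True)
causal_mask = attention_mask(len(tokens), bidirectional=False)

# Context available to the token "cat" (position 1) under each mask.
encoder_view = visible_context(tokens, encoder_mask, 1)
causal_view = visible_context(tokens, causal_mask, 1)

print("bidirectional:", encoder_view)
print("left-to-right:", causal_view)
```

Under the bidirectional mask the token at position 1 sees the whole sentence, while under the causal mask it sees only itself and the token to its left. A real model applies this kind of mask inside every attention layer, which is what "in all layers" refers to.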


![BERT explained: Training, Inference, BERT vs GPT/LLamA, Fine tuning, [CLS] token](https://i.ytimg.com/vi/90mGPxR2GgY/mqdefault.jpg)


Last Updated: April 5, 2026
